The White House recently released a new National AI Legislative Framework for Congress, setting the tone for how AI policy will shape the nation’s jobs, security, and daily life for decades. The framework points toward a smarter path: it aims to keep national goals clear and rules simple, letting builders move fast while the government focuses on real harms.

Right now, the biggest risk is policy chaos. More than 1,500 state bills now touch AI, and states are still rushing to pass different rules at the same time. That sounds active and responsible, but it can create a confusing map in which a startup must follow fifty different rulebooks before it can launch a single product. Large firms can absorb those compliance costs. Small teams usually cannot. When that happens, competition drops and progress slows.

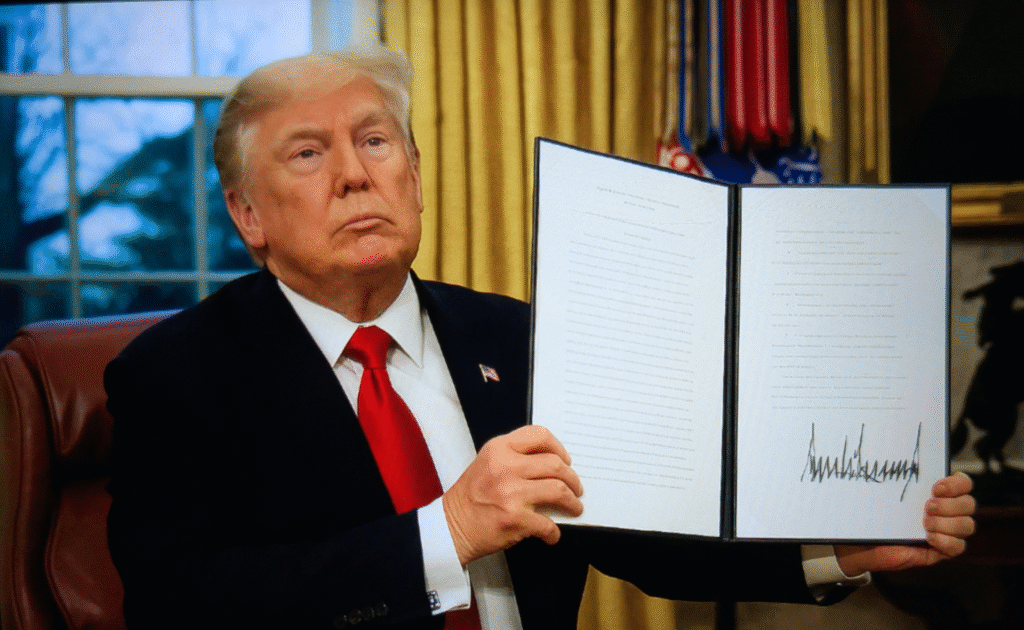

The new White House AI framework offers a better direction. It argues for a single, light-touch national standard instead of a fragmented patchwork. The framework builds on the White House executive order from December 2025, which called for a minimally burdensome national AI standard instead of fifty discordant state regimes. It also leaves room for state authority in important areas such as child safety and states' own use of technology. A national baseline like this can protect American citizens while still leaving room for local problem-solving.

The framework also gets specific in useful ways. It pushes Congress to avoid vague liability rules that invite endless lawsuits over lawful speech. It encourages legal clarity around harmful synthetic content while warning against open-ended standards. It supports faster permitting so AI infrastructure can expand without shifting unfair costs onto households. It recommends letting courts continue to handle major copyright disputes as case law develops. And it says Congress should not build a brand-new federal AI super agency, relying instead on existing expert agencies where needed.

There is also a strong free speech thread. The framework says Americans should have recourse if federal agencies pressure platforms or developers to shape outputs for political reasons. That is a key principle for open inquiry and public trust. AI should help people complete tasks, not become a tool for official narrative control.

Workforce policy is another bright spot. Instead of reactive, panic-driven rules, the framework encourages building AI skills through education and job training so workers can adapt as these tools improve. That approach matches my previous analysis in Understanding AI’s Impact on Work and how AI is Transforming Jobs, Not Destroying Them. The right goal is mobility and opportunity, which means helping people move into higher-value work rather than trying to freeze the economy in place.

The bottom line is that America does not need a brake pedal disguised as safety policy. A “try first” model with targeted enforcement, like the one this framework proposes, is the best way to protect consumers, grow opportunity, and keep the nation's advantage in a fast-moving world.